Data Product Infrastructure as a Service

Another blog post inspired by a recent conversation with my lovely Norwegian colleagues where I was asked about the creation of a data sandbox to support a customer engagement.

Having done this before, it seemed like a good enough reason to share my knowledge and experience with the community in my usual form: what, why and how 🙂

Furthermore, there are many similarities in the creation of a sandbox compared to the ‘data product infrastructure as a service’ concepts included in a Data Mesh architecture.

What is a Data Sandbox?

A data sandbox is an isolated environment created with “real world” data that can be used for, but is not limited to, exploration and learning. Isolation of the environment is key, as performing exploration tasks on a production environment where data is refreshed regularly could interfere with the intent or the question(s) requiring an answer. To clarify, the environment is isolated in terms of access, and the data is isolated too, meaning it is now disconnected from any upstream source systems. The data is static, stale, not refreshed. Within the sandbox, users then have the freedom to change anything, including deleting data if that is needed to support the objective.

Lastly, in our definition of what a sandbox is, we should accept that data cannot be used outside of the sandbox, with technical guard rails put in place to ensure this doesn’t happen. This can be done in many ways, but to offer a simple technical example, the sandbox might be created as an Azure Virtual Machine on a VNet that doesn’t allow any outbound connections, only an inbound RDP session. Maybe extreme, but you get the idea.
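To make that guard rail a little more concrete, here is a minimal sketch of a check you could run against a set of network rules before handing a sandbox over. The rule shape (`direction`, `access`, `port`) and the function name are my own assumptions for illustration, not an Azure API, and the check ignores rule priorities and address ranges. It requires an explicit outbound deny because Azure network security groups allow outbound internet traffic by default.

```python
RDP_PORT = 3389  # default Remote Desktop port


def is_locked_down(rules: list[dict]) -> bool:
    """Return True when the rule set only lets RDP in and lets nothing out.

    Each rule is a hypothetical dict with 'direction', 'access' and 'port'
    keys; priorities and address prefixes are ignored to keep this short.
    """
    allow_rules = [r for r in rules if r["access"] == "Allow"]
    # Every explicit allow must be inbound RDP and nothing else.
    only_rdp_in = all(
        r["direction"] == "Inbound" and r["port"] == RDP_PORT for r in allow_rules
    )
    # There must be an explicit rule denying all outbound traffic.
    denies_all_outbound = any(
        r["direction"] == "Outbound" and r["access"] == "Deny" and r["port"] == "*"
        for r in rules
    )
    return only_rdp_in and denies_all_outbound
```

A check like this could sit at the end of a deployment as a simple pass/fail gate.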

In summary, isolation of:

- Data

- Infrastructure

- Access

With an approved purpose and shelf life.

Why Do You Need/Want a Data Sandbox?

There can be many reasons that motivate the need for a data sandbox. Here are a few that I’ve encountered and that inform the content of this post:

- Performing a discrete audit on data processed.

- Investigating an historic business event that only requires a subset of data in terms of both entities and data duration. For example, only 10 tables from the 50 in the semantic layer and only for the last 6 months of data.

- Training a new team of information analysts without wishing to expose access to the production environment.

- Creating a set of predictions on static data where model training/tuning requires that the data doesn’t change over time.

How Do You Create a Data Sandbox?

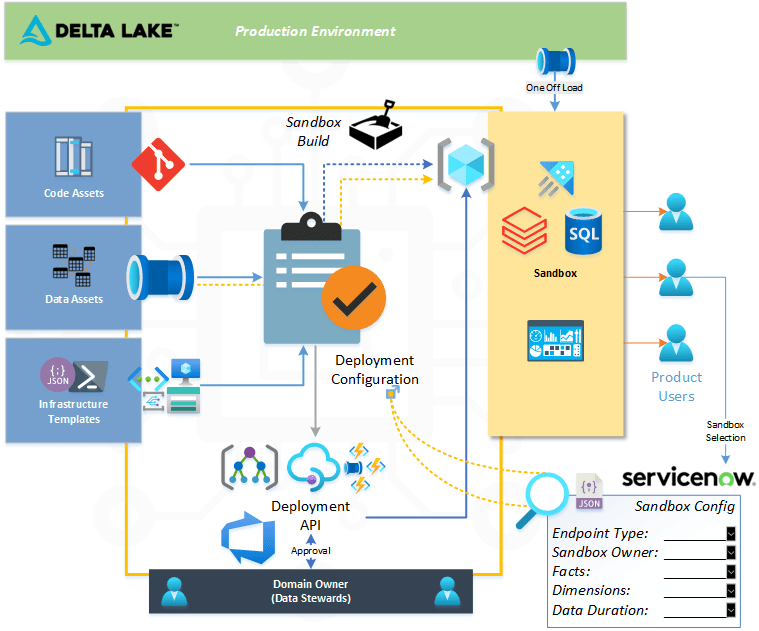

Your technology stack may differ, but for the architectures I’ve worked on for this use case, the picture above helps describe the technical approach.

ServiceNow was the tool used in-house, where a custom form was created allowing the wider business users to define what the sandbox needed to contain in terms of technology and data. The payload from the ServiceNow form was then passed to an API and used to drive a DevOps pipeline deployment, with support from the internal asset marketplace.
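As a rough illustration of the API step, the sketch below validates a form payload before it would be handed on to a deployment pipeline. The field names and the `SandboxRequest` type are hypothetical, chosen to reflect the "technology and data" choices described above; the real form will look different.

```python
from dataclasses import dataclass
from datetime import date

# Hypothetical field names; the real form payload will differ.
REQUIRED_FIELDS = (
    "requested_by", "purpose", "technologies", "tables", "expires_on",
)


@dataclass(frozen=True)
class SandboxRequest:
    requested_by: str
    purpose: str
    technologies: tuple[str, ...]
    tables: tuple[str, ...]
    expires_on: date


def parse_request(payload: dict) -> SandboxRequest:
    """Validate a raw form payload and turn it into a typed request."""
    missing = [name for name in REQUIRED_FIELDS if name not in payload]
    if missing:
        raise ValueError(f"missing fields: {', '.join(missing)}")
    if not payload["tables"]:
        raise ValueError("at least one table must be requested")
    return SandboxRequest(
        requested_by=payload["requested_by"],
        purpose=payload["purpose"],
        technologies=tuple(payload["technologies"]),
        tables=tuple(payload["tables"]),
        expires_on=date.fromisoformat(payload["expires_on"]),
    )
```

Rejecting incomplete requests at this point keeps bad input away from the pipeline, where a failure is slower and more expensive to unpick.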

Once the infrastructure deployment was complete for the sandbox, a one-off load of data could take place to provide all the datasets required.
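Picking up the earlier example of only needing some tables and only the last six months of data, a one-off load can be planned with very little logic. The sketch below is an assumption about how you might express that plan; the function names and the output shape are hypothetical, and the actual copy would be done by whatever data movement tool you use.

```python
import calendar
from datetime import date


def months_ago(today: date, months: int) -> date:
    """Return the date `months` calendar months before `today`."""
    total = today.year * 12 + (today.month - 1) - months
    year, month_index = divmod(total, 12)
    month = month_index + 1
    # Clamp the day so that, for example, 31 August maps to the end of February.
    day = min(today.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def plan_one_off_load(tables: list[str], months: int, today: date) -> list[dict]:
    """Build one load instruction per requested table, limited by a cutoff date."""
    cutoff = months_ago(today, months).isoformat()
    return [{"table": table, "from_date": cutoff} for table in tables]
```

Because the load only runs once, the cutoff is fixed at build time, which is what keeps the sandbox data static afterwards.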

In addition, the configuration information for the sandbox was stored, allowing for re-use and re-build. Given the throwaway nature of a sandbox, this was important to avoid another round of configuration.
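One simple way to store that configuration, sketched here as an assumption rather than what we actually built, is as sorted JSON with a fingerprint. The fingerprint lets you confirm that a re-build uses exactly the same configuration as the original. The function names are hypothetical.

```python
import hashlib
import json


def serialise_config(config: dict) -> str:
    """Serialise the sandbox configuration deterministically (sorted keys)."""
    return json.dumps(config, sort_keys=True, indent=2)


def load_config(text: str) -> dict:
    """Read a stored configuration back for a re-build."""
    return json.loads(text)


def config_fingerprint(config: dict) -> str:
    """SHA-256 of the serialised config; equal configs give equal fingerprints."""
    return hashlib.sha256(serialise_config(config).encode("utf-8")).hexdigest()
```

The serialised text could then live alongside the pipeline definition in source control, so a re-build is a matter of pointing the deployment at a stored file.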

Governance for the sandbox then becomes very important to avoid another silo of reporting outputs. Therefore, strict policies and approvals are needed, along with some automation and technical oversight that sets an expiry date for the entire Azure Resource Group. This was handled by tagging in Azure and reporting that prompted the clean-up of expired sandboxes.
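The reporting side of this can be very small. The sketch below assumes you already have a list of resource groups with their tags (however you choose to retrieve them) and that the expiry date is held in a tag as an ISO date. The tag name `sandbox-expiry` and the input shape are hypothetical.

```python
from datetime import date

EXPIRY_TAG = "sandbox-expiry"  # hypothetical tag name holding an ISO date


def find_expired(resource_groups: list[dict], today: date) -> list[str]:
    """Return the names of resource groups whose expiry tag is in the past."""
    expired = []
    for group in resource_groups:
        tags = group.get("tags") or {}  # tags may be missing or None
        raw_expiry = tags.get(EXPIRY_TAG)
        if raw_expiry is None:
            continue  # not tagged as a sandbox, so not ours to report on
        if date.fromisoformat(raw_expiry) < today:
            expired.append(group["name"])
    return sorted(expired)
```

Run on a schedule, the output of something like this is enough to prompt the owner of each sandbox, or to feed an automated clean-up once the policy allows it.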

I hope you found this helpful.

Many thanks for reading.